Stop me if you’ve heard this:

Now with self-driving cars, engineers will be faced with dilemmas. They will have to decide the answers to certain contentious questions in moral philosophy. For example, should a car go straight and hit the child, or divert and hit the man? How should the software be programmed to behave? No longer can we just say, “it’s subjective, who knows.” We will actually have to make a decision.

Let me start by saying that I believe questions of the trolley problem variety are interesting and important for philosophers to discuss. It may even be necessary to settle them for some specific contexts.

However, the idea that this applies to self-driving cars is nonsense.

1. More code is bad

I want to explain a perspective that many software engineers share, but that I can’t assume everyone knows. The perspective is that code is basically bad. That is, if you have two ways of implementing something, one that uses more code and one that uses less, the one that uses less is probably better. This is because the more code there is, the harder the project is to maintain and the more opportunities there are to introduce bugs.

Therefore, if you have a bit of extra code that says, “here’s how the car should behave in this certain ethically interesting situation,” then you are introducing a liability. I will elaborate on this later.

2. Oft-cited “Trolley Problem” moral dilemmas are extremely rare for cars.

How many times in your life have you had to choose between killing the old man and swerving to kill the cancer patient? Probably the vast majority of cars (more than 99.9%) will never encounter a situation like that. Why doesn’t the car just stop? Are the brakes failing? Why can’t the car just swerve out of the way of all the people? Is the car in some sort of narrow corridor? It is such a specific contrivance of circumstances that it’s on par with a lightning strike.

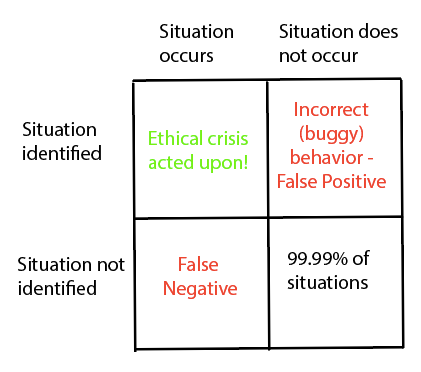

Taken together, points #1 and #2 imply that hard-coding exactly how cars should handle obscure moral dilemmas will produce a high rate of type I errors: false positive identifications of dilemmas, i.e., the car acting “morally” in situations where that logic shouldn’t apply. When real dilemmas are this rare, even a fairly accurate dilemma detector will flag far more false dilemmas than true ones.

If ethical dilemmas are common, then you will want to make sure the software can always identify them. If ethical dilemmas are very rare (as I argue), then it’s better to tell the car to just behave how it does in 99.99% of situations, at the expense of “correct” behavior in ethical dilemmas.
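To make the base-rate point concrete, here is a small Python sketch using Bayes’ rule. Every number in it is a hypothetical assumption chosen for illustration, not a measurement: suppose a true dilemma occurs once per million driving situations, and a hard-coded dilemma detector catches 99% of real dilemmas while misfiring on only 0.1% of ordinary situations.

```python
def precision_of_detector(prevalence: float, sensitivity: float,
                          false_positive_rate: float) -> float:
    """Fraction of flagged situations that are real dilemmas (positive predictive value)."""
    true_pos = prevalence * sensitivity                  # real dilemmas correctly flagged
    false_pos = (1.0 - prevalence) * false_positive_rate # ordinary situations wrongly flagged
    return true_pos / (true_pos + false_pos)

# Hypothetical numbers for illustration only.
prevalence = 1e-6           # assumed: 1 real dilemma per million situations
sensitivity = 0.99          # assumed: detector catches 99% of real dilemmas
false_positive_rate = 1e-3  # assumed: detector misfires on 0.1% of ordinary situations

ppv = precision_of_detector(prevalence, sensitivity, false_positive_rate)
print(f"Share of flagged situations that are real dilemmas: {ppv:.4%}")
```

Under these assumptions, only about 0.1% of flagged situations are real dilemmas, so roughly 999 out of every 1,000 times the special-case “ethics” code fires, it fires on an ordinary situation.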

Let me add that I think the types of “bugs” we are risking are really, really bad. If we program in something like “the life of [this kind of person] is more valuable than the life of [that kind of person],” and that assumption then manifests incorrectly in the car’s behavior… that’s bad. Suffice it to say, it’s highly undesirable to hard-code those kinds of prejudices into mechanical robots (which is what a self-driving car is). You shouldn’t do anything like that unless you absolutely have to.

If I were making this argument in real time, I imagine I would get interrupted with this response:

But that argument is a cop-out! You can’t just say that you’re not going to deal with an issue just because “it’s rare.” To talk about rarity is dodging the issue. If the car DOES get into that situation, even hypothetically, it still has to do SOMETHING. It is impossible to avoid telling the car what to do, because whatever code you write, it will result in some behavior on the part of the car. So the car will necessarily be programmed to do something, even if only implicitly.

The problem I have with this argument is the word “programmed.” It is important to emphasize that self-driving cars use machine learning, virtually without exception. So, strictly speaking, the vast majority of a car’s behavior is trained, not programmed.

I’m not saying that everyone has this misconception… but a lot of the people I see talking about this issue speak as if we were dealing with a piece of software where humans need to code in every decision about what the car should do at all times.

Roughly speaking, the way you train an ML model for Autonomous Vehicles (from here on, “AVs”) is: you give the model examples of good human driving as training data, and then you tell the software “drive the car like those examples, minus the imperfections.”
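The idea of training on human examples (usually called imitation learning or behavior cloning) can be sketched in a few lines. This is a deliberately tiny, hypothetical Python example, not how any real AV stack works: the “scene” is reduced to a single number, the car’s lateral offset from the lane center, and the “model” is a one-parameter linear fit to made-up human steering corrections, learned by least squares. Nobody writes a rule saying how hard to steer; the gain comes from the data.

```python
# Hypothetical human demonstrations: (lateral offset in meters, steering command).
# Positive offset = car is right of center; humans steer left (negative) to correct.
demonstrations = [
    (-0.8, 0.41),
    (-0.4, 0.19),
    (0.0, 0.01),
    (0.3, -0.16),
    (0.7, -0.34),
]

def fit_steering_gain(samples):
    """Least-squares fit of steering = gain * offset (no intercept)."""
    numerator = sum(x * y for x, y in samples)
    denominator = sum(x * x for x, _ in samples)
    return numerator / denominator

def policy(offset, gain):
    """The 'trained' policy: reproduce the humans' average correction."""
    return gain * offset

gain = fit_steering_gain(demonstrations)
print(f"learned gain: {gain:.3f}")
print(f"steering at 0.5 m right of center: {policy(0.5, gain):.3f}")
```

The least-squares fit averages over all the demonstrations, which is one simple way the “minus the imperfections” part can work: individual drivers’ over- and under-corrections partly cancel out.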

Of course, it varies. Some AV software products rely more heavily on human-written logic than others. Indeed, sometimes there is a heck of a lot of human-written logic in the mix.

But I would argue that, even for the more human-coded software products, there is a limit to the number of situations we can require human coders to decide explicitly. We simply cannot make human coders explicitly decide every possible situation (up to and including ethically ambiguous ones). If that were required, AV software could never be built, because there are far more situations than we have man-hours to code.
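A back-of-the-envelope count shows why explicit enumeration does not scale. Purely as an illustrative assumption, describe a driving situation with n independent yes/no features (wet road, pedestrian present, oncoming traffic, and so on), and suppose a human coder could decide one combination per minute, working 2,000 hours a year.

```python
def situations(num_binary_features: int) -> int:
    """Number of distinct situations if each feature is an independent yes/no."""
    return 2 ** num_binary_features

def coder_years(num_situations: int, minutes_each: float = 1.0,
                hours_per_year: float = 2000.0) -> float:
    """Person-years needed to hand-decide every situation (hypothetical rates)."""
    return num_situations * minutes_each / 60.0 / hours_per_year

for n in (10, 20, 40):
    count = situations(n)
    print(f"{n} features -> {count:,} situations, ~{coder_years(count):,.1f} coder-years")
```

With 40 features this comes to about 1.1 trillion situations, or roughly nine million coder-years, and real scenes are described by continuous quantities rather than a handful of yes/no flags.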

Any AV software product needs to decide what is hard-coded vs what is inferred by ML from training data. The oft-discussed ethically ambiguous “philosophy” situations are so esoteric that they fall into the category of, “we’ll just use the ML to infer what to do.”

Sure, you can use ML to handle the fine-grained specifics of behavior and to identify the nature of the situation in the course of driving. But we should still use humans to describe general rules, e.g., traffic laws, and how to handle edge cases. Isn’t that the best way to do it?

This is the best rebuttal to my argument, because it implies that we still need humans to specify edge case behavior.

But there is a cutoff. How “edge” are we talking here? There are theoretically infinitely many possible edge situations. Keep it simple: hard-code a limited number of heuristics, and let AI handle the rest.

Admittedly, that approach *is technically* supplying an answer to trolley problem dilemmas: default to basic heuristics and follow rules, like traffic laws, even though they may not be optimal in edge cases.
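One way to picture “a limited number of heuristics, with ML handling the rest” is a thin layer of hand-written rules that can override a learned policy. The sketch below is hypothetical: `learned_policy` stands in for a trained model, and the two rules are illustrative, not taken from any real AV system. Note that there is no branch for trolley-style dilemmas; in such a case the car simply falls through to the same rules it uses everywhere else.

```python
from dataclasses import dataclass

@dataclass
class Scene:
    red_light_ahead: bool
    obstacle_in_path: bool
    lateral_offset_m: float  # distance from lane center, meters

@dataclass
class Action:
    steering: float  # negative = left, positive = right
    brake: bool

def learned_policy(scene: Scene) -> Action:
    """Hypothetical stand-in for a trained model (fixed gain, never brakes on its own)."""
    return Action(steering=-0.5 * scene.lateral_offset_m, brake=False)

def drive(scene: Scene) -> Action:
    """A few hard-coded heuristics; everything else is left to the learned policy."""
    action = learned_policy(scene)
    # Heuristic 1: follow traffic law and stop for red lights.
    if scene.red_light_ahead:
        action.brake = True
    # Heuristic 2: brake for anything in the path.
    # Deliberately, there is no rule ranking whose life matters more.
    if scene.obstacle_in_path:
        action.brake = True
    return action

print(drive(Scene(red_light_ahead=False, obstacle_in_path=True, lateral_offset_m=0.2)))
```

The design choice is that the rule layer stays small and boring: each rule is easy to audit, and anything the rules don’t cover is handled by whatever the model learned from ordinary driving.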

I still think that’s the right approach, though. The key thing here is that we do not need to “solve” everything on a philosophical level. We only need to solve these problems on a practical level, which essentially means not solving them. We don’t need perfection.

But if we’re using training data, why not at least include ethical dilemmas as some of the examples?

If ethical dilemmas happen to crop up in the good-driving examples you accumulate normally, there’s no harm in that. But I would caution against contriving these situations specifically for use as training data. We want training data to be representative of typical driving (even in the case of accidents). By including contrived or atypical situations, you’re weighting the car toward unusual behavior.
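Here is a toy illustration of that weighting effect, under simplified, hypothetical assumptions: treat the “model” as simply learning how often each action appears in its training data for one kind of scene (a maximum-likelihood frequency estimate). All counts are made up. Adding a batch of contrived swerve examples shifts what the model considers normal, even though real drivers almost never swerved.

```python
from collections import Counter

def learned_action_probabilities(examples):
    """Maximum-likelihood estimate: probability of each action = its share of the data."""
    counts = Counter(examples)
    total = sum(counts.values())
    return {action: n / total for action, n in counts.items()}

# Hypothetical counts for one kind of scene (a pedestrian appears ahead).
typical = ["brake"] * 9_990 + ["swerve"] * 10
contrived = ["swerve"] * 1_000  # hand-made "dilemma" examples

before = learned_action_probabilities(typical)
after = learned_action_probabilities(typical + contrived)
print(f"P(swerve) before: {before['swerve']:.4f}, after: {after['swerve']:.4f}")
```

In this toy, the learned probability of swerving jumps from 0.1% to about 9%. A real model generalizes rather than counting, but the general tendency is similar: whatever you over-represent in training, the model is pushed to treat as more typical.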

But how can you leave something as important as ethics in the hands of machine learning? We can’t let a black box make these kinds of important ethical decisions!

I at least respect the other hypothetical rebuttals I’ve considered. This rebuttal, I do not respect that much.

Eliciting fear with the term “black box” reminds me of EU regulation:

I would be surprised, but remember this is coming from the same people who are the reason you have to install the browser extension that blocks the “we use cookies” popups on every website.

I know this is uncharitable, but I basically attribute this take to the typical ignorance-driven fear of technology. We have been entrusting our lives to machines for as long as we’ve had tools. The “safest” option won’t necessarily conform to traditional intuitions about what makes things safe.

Remember, it’s all about relative advantage. AV software doesn’t need to be perfect; it just needs to be better than humans by a sizable margin. An AV definitely could make the “wrong” decision in a morally ambiguous situation. But the very fact that these situations are morally ambiguous means that either decision is one a non-negligible number of humans would both make and defend. We’ve come full circle: we really can use “it’s subjective!” as a defense. It’s bad if the car doesn’t know what to do, but humans do badly in these situations too (because there’s disagreement!).

Finishing thoughts

Whenever I think about this issue, I remember a website I frequented in high school: nationstates.net. The website presents you with “dilemmas” where you pick a policy as part of building a nation. One of the first dilemmas it would always pose concerned autonomous vehicles, described here. I always picked option #3:

“This whole debate is ridiculous,” says @@RANDOMNAME@@, a software engineer who is fixing the fonts on your computer. “To be honest, these schoolbus scenarios sound like something dreamed up by a Philosophy 101 professor, not something that will actually happen in real life. It’s not the place of software to weigh the value of human life! Just program them to follow the road rules at all times!”

@@RANDOMNAME@@’s answer always rang true to me for its pragmatism.

Honestly, the idea of employees from big tech companies calling up moral philosophers to decide how AVs should behave sounds like a total shit show. Moral philosophers love to think up extremely contrived situations. They do so because, in the effort to get to “ground truth,” they don’t care how contrived a thought experiment is, as long as it clarifies some question. (I’m no philosopher, but see some of my own thought experiments.) Engineers are playing a whole different game: they are trying to design products that are reliable and standard. Engineers shouldn’t let themselves be talked into embedding some utility for determining whose life is most valuable.